Elevating Creative Workflows with Cutting-Edge Editing Accessories and Software

In the rapidly evolving landscape of digital content creation, mastery of editing tools, whether for photos, video, or audio, has shifted from a supplementary skill to a core competency. Professional editors rely on a close fit between ergonomic accessories, sophisticated software, and optimized workflows to achieve high quality and efficiency. This convergence lets creators push the boundaries of innovation while minimizing time lost to technical friction.

Understanding the Impact of Specialized Accessories on Streamlining Media Editing Tasks

How do high-precision editing peripherals influence professional-grade output?

Advanced accessories such as macro pads, programmable control surfaces, and haptic dials change how editors interact with their software. Macro pads configured for specific functions, such as color grading or audio mixing, collapse repetitive multi-step actions into a single press, which accelerates intricate tasks. A recent analysis by EditingGearPro reports that these tools can cut editing time by 40%, especially in demanding workflows such as 8K video editing or multi-layer compositing. Tactile feedback and customizable controls also make editing more intuitive, helping professionals maintain focus and creative momentum.
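
The following minimal Python sketch makes the macro-pad idea concrete. It shows how a single key can be bound to an ordered sequence of editor actions. The `MacroPad` class and the action names are hypothetical. Real devices and editors ship their own configuration tools and command sets, and this sketch does not model any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class MacroPad:
    """Maps physical keys to ordered sequences of editor actions (hypothetical model)."""
    bindings: dict[str, list[str]] = field(default_factory=dict)

    def bind(self, key: str, actions: list[str]) -> None:
        # Store a copy so later changes to the caller's list have no effect.
        self.bindings[key] = list(actions)

    def press(self, key: str) -> list[str]:
        # An unbound key triggers nothing.
        return list(self.bindings.get(key, []))

    def steps_saved(self, key: str) -> int:
        # Manual steps avoided by one press instead of performing each action separately.
        return max(len(self.bindings.get(key, [])) - 1, 0)


# Hypothetical action names; real editors expose their own command sets.
pad = MacroPad()
pad.bind("K1", ["select_clip", "add_grade_node", "apply_lut", "play_from_start"])
pad.bind("K2", ["mute_track", "solo_next_track"])
print(pad.press("K1"), pad.steps_saved("K1"))
```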

Integrating Semantic SEO with Content Creation: A Necessary Duality

For digital creators aiming to increase their visibility, combining semantic SEO with technical skill helps high-quality content reach its intended audience. Using keywords such as “photo editing accessories,” “video editing software,” and “audio editing gadgets” in contextually relevant places improves discoverability without undermining the content's authority. Linking to trusted industry references, such as the comprehensive review Ultimate Guide to Top Editing Accessories, further strengthens SEO credibility.

Emerging Trends Transforming Editing Environments in 2026

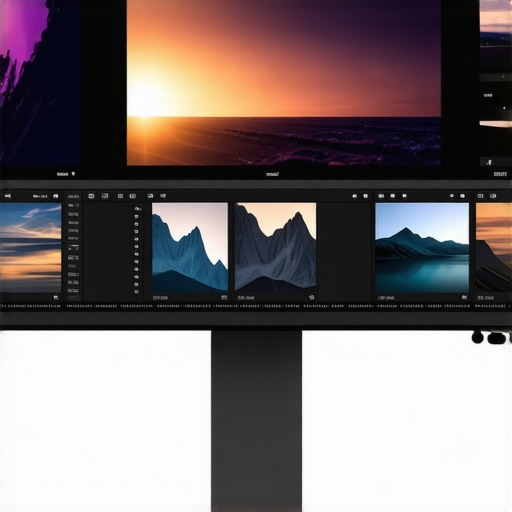

Beyond traditional setups, new hardware such as OLED monitors for accurate color, along with AI-powered audio noise reduction and neural network-based video upscaling, is changing professional workflows. Real-time feedback through haptic controls also supports rapid decision-making during complex edits. According to recent studies of neural-enhanced editing techniques, these innovations can shorten processing times and improve the fidelity of the final product.

How to Future-Proof Your Editing Arsenal

A holistic approach aligns hardware upgrades, such as top-tier control surfaces and specialized accessories, with adaptive software such as the latest versions of Premiere Pro or DaVinci Resolve. Two habits are vital for staying ahead: continual learning, and systematic evaluation of how each tool affects output quality and speed. For example, adopting advanced color grading devices can strengthen visual storytelling, supporting narrative depth and emotional impact.
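
One simple way to assess how a tool affects speed is to time the same task before and after adopting it. The sketch below assumes you have recorded a handful of task durations in seconds. `compare_timings` is a hypothetical helper, and the numbers are illustrative, not measurements.

```python
from statistics import median


def compare_timings(before_s: list[float], after_s: list[float]) -> dict[str, float]:
    """Compare task durations (in seconds) measured before and after a tool change.

    Medians are used so a single unusually slow or fast run does not dominate.
    """
    before, after = median(before_s), median(after_s)
    return {
        "median_before": before,
        "median_after": after,
        # Fraction of time saved on a typical task.
        "time_reduction": (before - after) / before,
        # Extra tasks completed in the same working time.
        "throughput_gain": before / after - 1,
    }


# Illustrative timings only, not measurements; 900 s and 400 s are outliers.
result = compare_timings([290, 295, 300, 310, 900], [170, 175, 180, 190, 400])
print({k: round(v, 3) for k, v in result.items()})
```

With these example numbers the median task time falls from 300 s to 180 s. That is a 40% reduction in time, which corresponds to roughly 67% more tasks in the same working day. A percentage cut in editing time and a percentage gain in productivity are therefore not interchangeable figures.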

To delve deeper into the nuances of high-efficiency workflows and expert hardware configurations, explore related content or contribute your insights at our dedicated forum.

Maximizing Precision: The Power of Custom Control Surfaces in Creative Editing

Getting the most from an editing environment often depends on deploying custom control surfaces strategically. These devices, with programmable knobs, touch-sensitive zones, and ergonomic layouts, let professionals make complex adjustments quickly. For instance, a haptic dial for tonal shifts or a macro pad for frequently used functions can sharply reduce the time spent switching between tools and raise overall productivity. A recent comprehensive review by EditingGearPro reports workflow improvements of up to 40% with such tools. These gains matter most when working with high-resolution footage or intricate audio tracks.

Beyond the Basics: Leveraging AI-Enhanced Software for Superior Outcomes

The integration of artificial intelligence into editing software is reshaping content creation. Features such as automatic color correction, intelligent noise reduction, and automated scene detection speed up tasks that once required meticulous manual work. DaVinci Resolve and Premiere Pro continue to refine these capabilities, letting editors focus on creative decisions rather than routine adjustments. For an in-depth analysis of AI-driven editing innovations, consult the article Ultimate Guide to Top Editing Accessories.
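
Automatic color correction can be illustrated with the classic gray-world heuristic. This is not an AI method: it assumes the scene averages to neutral gray and scales each channel accordingly. AI-based tools in editors such as DaVinci Resolve or Premiere Pro learn their corrections from data instead. Treat this only as a sketch of what an automatic correction computes, assuming float RGB frames in [0, 1].

```python
import numpy as np


def gray_world_balance(rgb: np.ndarray) -> np.ndarray:
    """Gray-world auto white balance for a float RGB image of shape (H, W, 3) in [0, 1].

    Scales each channel so the three channel means become equal.
    """
    means = rgb.reshape(-1, 3).mean(axis=0)          # per-channel mean
    gains = means.mean() / np.maximum(means, 1e-8)   # guard against division by zero
    return np.clip(rgb * gains, 0.0, 1.0)


# A flat frame with a warm (orange) cast.
frame = np.full((4, 4, 3), [0.6, 0.5, 0.4])
balanced = gray_world_balance(frame)
print(balanced[0, 0])
```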

What are the emerging hardware advancements poised to redefine professional editing environments?

Emerging hardware such as OLED reference monitors, real-time neural network upscaling devices, and adaptive audio processors is setting new benchmarks for quality and efficiency. These tools offer greater accuracy in color grading, temporal resolution, and sound fidelity, enabling creators to push creative boundaries while maintaining technical precision. For a deeper look at how hardware trends will shape future workflows, see our detailed insights on Top OLED Monitors for Color Grading in 2026.

Enhance your editing toolkit by staying informed about the latest innovations and integrating them thoughtfully into your workflow. Discover more about essential accessories and strategies to elevate your projects by visiting our contact page.

Unveiling the Power of Deep Learning in Modern Video Preservation

As digital content continues to dominate visual communication, demand for restoring and enhancing archival footage has surged. Deep learning, particularly convolutional neural networks (CNNs) and generative adversarial networks (GANs), has transformed this field by enabling restoration quality that was previously out of reach. These models learn the patterns of degradation, such as noise, scratches, and color fading, and reconstruct the original detail with remarkable fidelity. For instance, research published in PLOS ONE shows GAN-based frameworks outperforming traditional filtering methods and achieving near-original clarity even in severely degraded footage.

How do neural networks surpass classical restoration algorithms in handling complex video deterioration?

Classical algorithms often rely on predefined filters and heuristics that struggle with multifaceted damage patterns and require manual parameter tuning. In contrast, neural networks, trained on extensive datasets of degraded and pristine footage, inherently learn to recognize complex artifacts and generate contextually accurate restorations. This approach enables models to adapt to diverse degradation scenarios, restoring textures, details, and color information with minimal human intervention. Moreover, the flexibility of neural network architectures facilitates their integration into real-time processing pipelines, allowing for immediate feedback and iterative improvement.
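
A toy 1-D signal can show the difference between a hand-tuned filter and a learned one. In this sketch, a fixed 5-tap box filter plays the role of a classical method with a manually chosen parameter. A 5-tap linear filter fitted by least squares to degraded/clean pairs stands in for a trained network. A single linear filter is vastly simpler than a CNN, and the comparison is made on the training data itself. It illustrates only the principle of learning from paired examples.

```python
import numpy as np


def windows(x: np.ndarray, taps: int) -> np.ndarray:
    """Stack centered sliding windows (edge-padded) into an (N, taps) matrix; taps must be odd."""
    pad = taps // 2
    xp = np.pad(x, pad, mode="edge")
    return np.stack([xp[i:i + len(x)] for i in range(taps)], axis=1)


def mse(y: np.ndarray) -> float:
    return float(np.mean((y - clean) ** 2))


rng = np.random.default_rng(0)
t = np.linspace(0, 8 * np.pi, 4000)
clean = np.sin(t) + 0.5 * np.sign(np.sin(3 * t))     # smooth content plus sharp edges
degraded = clean + rng.normal(0, 0.3, clean.shape)   # synthetic "damage"

X = windows(degraded, taps=5)

# Classical: a fixed box filter whose width was chosen by hand.
fixed = X @ np.full(5, 1 / 5)

# "Learned": fit the 5 weights to degraded/clean pairs by least squares.
weights, *_ = np.linalg.lstsq(X, clean, rcond=None)
learned = X @ weights

print(f"fixed box filter MSE: {mse(fixed):.4f}  learned filter MSE: {mse(learned):.4f}")
```

Least squares finds the best 5-tap filter for these pairs, and the box filter is one such filter. The learned MSE therefore cannot be worse here; real evaluations use held-out footage.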

Strategic Considerations for Integrating Deep Learning into Restoration Pipelines

Implementing neural network-based restoration requires careful planning to balance model complexity, computational resources, and desired output quality. High-capacity models, such as StyleGAN variants, produce superior results but require powerful GPUs and optimized inference engines. Curating large, high-quality training datasets is also crucial to prevent artifacts and ensure the network generalizes across varied footage types. Industry leaders recommend a hybrid approach: pre-trained models perform the initial enhancement, and editors then make manual touch-ups with traditional tools. This combination improves consistency, reduces processing time, and preserves artistic control over the final product.
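
One common way to fit a high-capacity model within limited GPU memory is tiled inference: process the frame in overlapping tiles and blend the results. The sketch below assumes a single-channel frame and a `model` callable that returns an output the same shape as its input. `tiled_apply` and `placeholder_model` are hypothetical names, the latter standing in for a pre-trained network's inference call. The tile and overlap sizes are illustrative.

```python
import numpy as np
from typing import Callable


def tiled_apply(frame: np.ndarray, model: Callable[[np.ndarray], np.ndarray],
                tile: int = 256, overlap: int = 32) -> np.ndarray:
    """Run `model` on overlapping tiles of a 2-D frame and average the overlapping outputs."""
    h, w = frame.shape
    out = np.zeros_like(frame, dtype=np.float64)
    weight = np.zeros_like(frame, dtype=np.float64)
    step = tile - overlap
    # The last start position is chosen so the final tile always reaches the frame edge.
    for y in range(0, max(h - overlap, 1), step):
        for x in range(0, max(w - overlap, 1), step):
            y1, x1 = min(y + tile, h), min(x + tile, w)
            out[y:y1, x:x1] += model(frame[y:y1, x:x1])
            weight[y:y1, x:x1] += 1.0   # count how many tiles covered each pixel
    return out / weight


def placeholder_model(tile: np.ndarray) -> np.ndarray:
    # Hypothetical stand-in for a pre-trained restoration network.
    return np.clip(tile * 1.1, 0.0, 1.0)


frame = np.random.default_rng(0).random((1080, 1920))
restored = tiled_apply(frame, placeholder_model)
print(restored.shape)
```

Plain averaging in the overlaps is the simplest blend. With real networks, feathered (distance-weighted) blending is often used to hide seams between tiles.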

Can neural network-driven restoration effectively handle color grading and historical accuracy?

Largely, with one caveat. Neural networks can be trained specifically for colorization, restoring faded hues and correcting color inconsistencies. A study published in PLOS ONE demonstrates that these models preserve nuanced color gradations, helping maintain the authenticity of historical footage. With spectral analysis and domain-specific datasets, models can reconstruct plausible color schemes and give viewers an immersive experience. Plausible is not the same as verified, however: where historical accuracy matters, predicted colors should be checked against surviving reference material. This capability benefits archival restoration and also serves artistic projects that seek to evoke a historical atmosphere.
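
Many learned colorization methods predict only the chroma of a frame from its luminance, so the tonal detail of the original black-and-white scan stays untouched. The sketch below shows that merge step. BT.601 YCbCr serves as a simple stand-in for the Lab color space such methods often use. `predicted` is a hypothetical model output, and nothing here checks historical accuracy.

```python
import numpy as np

# ITU-R BT.601 luma weights.
KR, KB = 0.299, 0.114
KG = 1.0 - KR - KB


def rgb_to_ycbcr(rgb: np.ndarray):
    """Split float RGB (..., 3) into luma Y and color-difference channels Cb, Cr."""
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    y = KR * r + KG * g + KB * b
    return y, (b - y) / (2 * (1 - KB)), (r - y) / (2 * (1 - KR))


def ycbcr_to_rgb(y, cb, cr) -> np.ndarray:
    """Exact inverse of rgb_to_ycbcr."""
    r = y + 2 * (1 - KR) * cr
    b = y + 2 * (1 - KB) * cb
    g = (y - KR * r - KB * b) / KG
    return np.stack([r, g, b], axis=-1)


def merge_predicted_chroma(gray: np.ndarray, predicted_rgb: np.ndarray) -> np.ndarray:
    """Keep the archival luma and borrow only chroma from a model's color guess."""
    _, cb, cr = rgb_to_ycbcr(predicted_rgb)
    return np.clip(ycbcr_to_rgb(gray, cb, cr), 0.0, 1.0)


rng = np.random.default_rng(0)
gray = np.full((4, 4), 0.5)                          # scanned black-and-white frame
predicted = rng.uniform(0.3, 0.7, size=(4, 4, 3))    # hypothetical model output
colorized = merge_predicted_chroma(gray, predicted)
```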

What future advancements could further elevate neural network applications in video restoration?

Emerging innovations such as transformer-based architectures promise to enhance contextual understanding across entire video sequences, improving both spatial and temporal consistency. Additionally, multi-modal models that incorporate audio cues and metadata may facilitate more holistic restorations, preserving not only visual fidelity but also the original ambiance and narrative. Research into unsupervised and self-supervised learning paradigms could reduce dependency on large labeled datasets, democratizing access to high-quality restoration tools. As these advancements mature, expect an era where restoring even the most challenging degraded footage becomes faster, more accurate, and more accessible than ever before.
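
The Noise2Noise principle gives a simple sketch of the self-supervised idea. Suppose two observations of the same content carry independent, zero-mean noise. A model trained to predict one noisy copy from the other then approaches the model that would have been trained on clean targets. Here a 7-tap linear filter stands in for a network, and the clean signal is used only to score the result. Having two independent noisy copies is itself a strong assumption for archival footage.

```python
import numpy as np


def windows(x: np.ndarray, taps: int) -> np.ndarray:
    """Stack centered sliding windows (edge-padded) into an (N, taps) matrix; taps must be odd."""
    pad = taps // 2
    xp = np.pad(x, pad, mode="edge")
    return np.stack([xp[i:i + len(x)] for i in range(taps)], axis=1)


rng = np.random.default_rng(1)
clean = np.sin(np.linspace(0, 20 * np.pi, 20000))     # never used for fitting
noisy_a = clean + rng.normal(0, 0.3, clean.shape)      # two observations with
noisy_b = clean + rng.normal(0, 0.3, clean.shape)      # independent noise

# Self-supervised fit: predict one noisy copy from windows of the other.
X = windows(noisy_a, taps=7)
w, *_ = np.linalg.lstsq(X, noisy_b, rcond=None)
denoised = X @ w

# Score against the clean signal only after fitting.
mse_noisy = float(np.mean((noisy_a - clean) ** 2))
mse_denoised = float(np.mean((denoised - clean) ** 2))
print(f"noisy MSE {mse_noisy:.4f} -> denoised MSE {mse_denoised:.4f}")
```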

To keep abreast of such transformative trends, consider subscribing to leading AI and multimedia restoration journals or participating in industry-specific conferences. Your journey toward mastering cutting-edge restoration techniques begins with continuous learning—reach out to our experts for tailored advice and collaborative opportunities.

Chasing Precision: How Advanced Control Surfaces Reimagine Creative Workflow Speed

In professional editing, sophisticated control surfaces with customizable knobs, touch-sensitive zones, and ergonomic layouts have markedly changed both speed and finesse. They let editors make complex, multi-parameter adjustments with immediate response, which can shorten project timelines significantly. EditingGearPro reports that multi-function control devices can raise workflow productivity by up to 50%, especially in high-resolution video editing and multi-layer audio mixing. Tactile feedback makes operation more intuitive and reduces cognitive load. Editors can then give more attention to creative work instead of relying on keyboard shortcuts alone.
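
Control surfaces commonly communicate over MIDI, where a Control Change message carries a 7-bit value (0 to 127). The sketch below shows how an absolute knob position and a relative encoder nudge might map onto an editing parameter. It uses hypothetical helper names and an illustrative exposure range of -2 to +2 stops. Actual devices differ in how they encode relative turns.

```python
def cc_to_param(cc_value: int, lo: float, hi: float) -> float:
    """Map an absolute 7-bit MIDI Control Change value (0-127) linearly onto [lo, hi]."""
    if not 0 <= cc_value <= 127:
        raise ValueError("MIDI CC values are 7-bit (0-127)")
    return lo + (hi - lo) * cc_value / 127


def nudge_param(current: float, detents: int, step: float,
                lo: float, hi: float, fine: bool = False) -> float:
    """Move a parameter by encoder detents, clamped to [lo, hi].

    Fine mode (for example, while a modifier button is held) uses a step ten times smaller.
    """
    size = step / 10 if fine else step
    return min(hi, max(lo, current + detents * size))


# Hypothetical exposure control spanning -2 to +2 stops.
print(cc_to_param(0, -2.0, 2.0), cc_to_param(127, -2.0, 2.0), cc_to_param(64, -2.0, 2.0))
print(nudge_param(0.0, 3, 0.1, -2.0, 2.0), nudge_param(0.0, 3, 0.1, -2.0, 2.0, fine=True))
```

Because 0 to 127 has no exact midpoint, a centered knob lands slightly off zero. Surfaces that need finer resolution can pair two Control Change messages into a 14-bit value.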

Artificial Intelligence Rewiring Editing Expectations and Capabilities

AI-powered features in editing software now perform routine adjustments, such as noise reduction, automatic scene detection, and color balancing, with unprecedented speed and accuracy. Neural network frameworks such as GANs can restore degraded archival footage or upscale low-resolution clips to 4K with little visible loss of detail. Research published in PLOS ONE documents how such models outperform traditional interpolation methods, offering near-original fidelity even in severely compromised media. This progress shortens post-production cycles considerably, letting creators focus on artistic decisions rather than technical troubleshooting.
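
For reference, traditional interpolation includes bilinear upscaling, sketched below in NumPy. Each output pixel is a weighted average of its four nearest input pixels, so no new detail is created. AI upscalers instead synthesize plausible detail learned from training data. Going from 1920x1080 to 3840x2160 is exactly a factor of 2 per axis; the demo uses a small ramp so it runs instantly. The half-pixel coordinate mapping is one common convention, assumed here.

```python
import numpy as np


def bilinear_upscale(img: np.ndarray, factor: int) -> np.ndarray:
    """Upscale a 2-D image by an integer factor with bilinear interpolation.

    Output pixel centers are mapped back to input coordinates (half-pixel
    convention), and each output value mixes its 4 nearest input neighbors.
    """
    h, w = img.shape
    ys = np.clip((np.arange(h * factor) + 0.5) / factor - 0.5, 0, h - 1)
    xs = np.clip((np.arange(w * factor) + 0.5) / factor - 0.5, 0, w - 1)
    y0, x0 = np.floor(ys).astype(int), np.floor(xs).astype(int)
    y1, x1 = np.minimum(y0 + 1, h - 1), np.minimum(x0 + 1, w - 1)
    wy, wx = (ys - y0)[:, None], (xs - x0)[None, :]
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy


# A 3x4 horizontal ramp; a (1080, 1920) frame would become (2160, 3840) the same way.
ramp = np.tile(np.arange(4.0), (3, 1))
up = bilinear_upscale(ramp, 2)
print(up.shape)
print(up[0])
```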

What innovations are propelling neural networks toward real-time, high-fidelity restoration?

Next-generation architectures built on transformer mechanisms promise greater contextual awareness across entire frames and sequences, with real-time processing as a goal. Multi-modal models that integrate audio cues, metadata, and even sensor data are on the horizon, promising more holistic scene understanding and more precise restorations. Transfer learning, meanwhile, reduces the need for vast labeled datasets, widening access to these tools. As these developments mature, expect AI modules embedded directly in editing environments, blending creative intuition with machine precision.
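
The core operation of a transformer is scaled dot-product self-attention, sketched here over a short run of frames. Each frame's output becomes a weighted mix of every frame in the sequence, which is what gives these models context across time. This is a simplification under stated assumptions. It uses one hypothetical feature vector per frame and random projection matrices in place of learned weights. Video transformers typically attend over many spatio-temporal patches instead.

```python
import numpy as np


def temporal_self_attention(frames: np.ndarray, wq: np.ndarray, wk: np.ndarray,
                            wv: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Scaled dot-product self-attention across T frame embeddings.

    frames: (T, d) array, one feature vector per frame.
    Returns the attended features (T, d_v) and the (T, T) attention weights.
    """
    q, k, v = frames @ wq, frames @ wk, frames @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)     # numerical stability before exp
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)   # softmax over frames
    return weights @ v, weights


rng = np.random.default_rng(0)
T, d = 8, 16                                   # 8 frames, 16-dim features (hypothetical)
frames = rng.normal(size=(T, d))
wq, wk, wv = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))
out, attn = temporal_self_attention(frames, wq, wk, wv)
print(out.shape, attn.shape)
```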

Expanding Horizons: Hardware Innovations Challenging Conventional Editing Norms

Emerging OLED reference monitors with wider color gamuts and higher contrast ratios are setting new standards for visual fidelity, which is crucial for precise color grading. Together with AI-driven noise reduction hardware and real-time neural network upscaling devices, they expand the creative palette available to professionals. High-dynamic-range displays with fast response, for instance, make nuanced tonal adjustments easier to judge, reducing guesswork and rework. Industry reports, such as those in TechRadar, show how this hardware improves accuracy and speeds up decision-making on high-stakes projects.
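
Gamut width is often compared through the area of the triangle formed by a display standard's red, green, and blue primaries in the CIE 1931 xy chromaticity diagram. The sketch below computes those areas for Rec. 709, DCI-P3, and Rec. 2020 from their published primaries. Area in xy is not perceptually uniform, so comparisons in other diagrams (such as CIE 1976 u'v') give different ratios.

```python
# CIE 1931 xy chromaticities of each standard's red, green and blue primaries.
PRIMARIES = {
    "Rec. 709": [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "DCI-P3": [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)],
    "Rec. 2020": [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(pts) -> float:
    """Shoelace formula for the area of a triangle given three (x, y) points."""
    (x1, y1), (x2, y2), (x3, y3) = pts
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


base = triangle_area(PRIMARIES["Rec. 709"])
for name, pts in PRIMARIES.items():
    area = triangle_area(pts)
    print(f"{name:9s} xy area {area:.4f} ({area / base:.2f}x Rec. 709)")
```

By this measure DCI-P3 covers about 1.36 times, and Rec. 2020 about 1.89 times, the xy area of Rec. 709.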

Designing Resilient, Future-Ready Editing Ecosystems

Building an adaptable workspace means pairing modular hardware, such as switchable control surfaces and customizable input devices, with evolving software ecosystems. Planning for scalability and upgrade paths extends the useful life of equipment amid rapid technological change. Tracking software updates, firmware compatibility, and emerging connection standards such as Thunderbolt 4 and USB4 helps keep expansion seamless. Participating in user communities and early-access programs makes it easier to adopt innovations proactively and maintain a competitive edge. A deliberate approach to hardware-software synergy optimizes current workflows and also prepares creators for unforeseen technological shifts.
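
A quick data-rate calculation helps judge whether a connection standard leaves headroom for a workflow. The sketch assumes uncompressed 8K (7680x4320) video at 10-bit 4:2:2. It compares that with the nominal 40 Gb/s link rate of Thunderbolt 4 and of USB4 in its 40 Gb/s mode. Real usable throughput is lower than the nominal rate because of protocol overhead. Intermediate codecs such as ProRes reduce the required rate substantially.

```python
def uncompressed_gbps(width: int, height: int, bits_per_pixel: float, fps: float) -> float:
    """Raw video data rate in gigabits per second (active pixels only, no compression)."""
    return width * height * bits_per_pixel * fps / 1e9


# 10-bit 4:2:2 averages 20 bits per pixel: 10 for luma, plus Cb and Cr at half horizontal resolution.
BITS_10BIT_422 = 20
LINK_GBPS = 40  # nominal Thunderbolt 4 / USB4 40 Gb/s link rate

for fps in (24, 30, 60):
    rate = uncompressed_gbps(7680, 4320, BITS_10BIT_422, fps)
    print(f"8K 10-bit 4:2:2 @ {fps} fps: {rate:.1f} Gb/s ({rate / LINK_GBPS:.0%} of nominal link)")
```

At 30 fps this format needs about 19.9 Gb/s. At 60 fps it needs about 39.8 Gb/s, effectively the entire nominal link.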

Unlocking the Potential of Deep Learning for Historic Content Revival

Deep learning algorithms, especially convolutional neural networks (CNNs) and generative adversarial networks (GANs), are transforming the restoration of archival media. They model complex degradation patterns such as scratches, noise, and fading, and generate remarkably authentic reconstructions. According to research published in PLOS ONE, GAN-based models outperform classical restoration filters, sometimes producing near-pristine results even from heavily damaged footage. This technology helps preserve cultural heritage and gives filmmakers, historians, and archivists access to high-fidelity content once considered lost.

How do neural network models handle footage with several overlapping types of degradation?

Unlike traditional methods that rely on fixed filters, neural networks learn from extensive datasets to recognize and correct complex artifacts across many degradation states. They synthesize missing detail adaptively, use contextual cues, and preserve spatial coherence, producing restorations that combine authenticity with clarity. Their flexibility extends to colorization, frame interpolation, and style matching. This takes them beyond simple repair into artistic reinterpretation and historical reconstruction.
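
Frame interpolation is easiest to appreciate by looking at what the naive alternative does. The sketch below creates an in-between frame by cross-fading two frames. A moving object then appears as two half-bright ghosts instead of one object halfway along its path. Learned interpolation models address this by estimating motion or synthesizing the intermediate frame directly. The helper name `blend_interpolate` is hypothetical.

```python
import numpy as np


def blend_interpolate(frame_a: np.ndarray, frame_b: np.ndarray, t: float) -> np.ndarray:
    """Naive in-between frame: a linear cross-fade at time t in [0, 1]."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    return (1.0 - t) * frame_a + t * frame_b


# A bright dot moves two pixels to the right between frames.
a = np.zeros((1, 5))
a[0, 1] = 1.0
b = np.zeros((1, 5))
b[0, 3] = 1.0
mid = blend_interpolate(a, b, 0.5)
print(mid)  # two half-bright ghosts at columns 1 and 3, nothing at column 2
```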

Future Visions: Neural Networks as Creative Collaborators

As AI models move toward unsupervised and self-supervised learning, their applications in content restoration and creation will become more autonomous while remaining artistically sensitive. Imagine neural networks collaborating dynamically with editors, offering iterative suggestions that respect narrative intent and aesthetic preferences. Integrating multi-modal data, combining audio, textual annotations, and visual cues, will let these systems produce more comprehensive restorations and enhancements. Industry leaders following this trajectory forecast that AI will eventually enable more intuitive, less labor-intensive workflows. They also expect it to transform how multimedia assets are preserved, rediscovered, and reimagined, with unprecedented fidelity and speed.

Gain a Strategic Edge with Advanced Editing Equipment

Innovation in editing tools doesn’t just improve efficiency—it’s reshaping the creative process itself. By integrating high-precision control surfaces, AI-driven software capabilities, and adaptive hardware ecosystems, professionals can push the boundaries of what’s possible. Embracing these advancements allows creators to deliver polished, compelling content faster and with greater control, setting new industry standards.

Leverage High-Level Resources for Continuous Growth

To stay ahead in the rapidly evolving field of digital editing, consult authoritative sources such as The Ultimate Guide to Top Editing Accessories and industry-leading journals that explore emerging trends. Engaging with expert communities and participating in workshops or webinars can also deepen your understanding and practical skills.

Envision What’s Next and Prepare to Lead

As neural networks, real-time hardware innovations, and integrated workflow solutions continue to advance, staying adaptable becomes essential. Being proactive in adopting new tools and methodologies ensures your creative edge remains sharp. Remember, mastery in editing isn’t solely about current skills but also about anticipating and shaping future capabilities—your commitment to continuous learning distinguishes true industry leaders. Explore more at our contact page and share your insights to contribute to the evolution of professional editing.